FastEddy®: Not a Pool Hall Hustler

A GPU-Accelerated Microscale Model

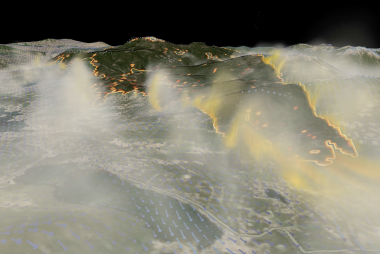

Imagine a wildfire approaching a densely populated neighborhood. Emergency managers need to decide, systematically and quickly, whether families should evacuate or stay put. To make a timely call, these first responders need accurate information at both the mesoscale (the region’s weather) and the microscale (the weather close to the terrain).

Or consider this unsettling scenario: a toxic gas has been released in a city. Where will the gas go, and how quickly? Will it drift toward one city block and bypass another? Again, first responders need this fine-scale information quickly and accurately to protect the public.

“This may be the most critical step in achieving a meaningful operational microscale modeling capability down the road.”

- Jeremy Sauer

Today’s day-to-week forecasts are reliable, but only after decades of research, testing, and refinement. We count on them and even take them for granted. These forecasts are a testament to the nation’s investment in R&D and to its support for developing and operating the nation’s superior numerical weather prediction computer models.

Capturing turbulence at finer scales, however, has proven more challenging. The computational expense is so substantial that current state-of-the-art numerical weather prediction (NWP) models cannot produce these high-resolution forecasts in real time.

Microscale modeling is finally catching up

A new model being developed at NCAR will, quite simply, do fine-scale modeling better and faster. How? Scientists are developing a new Large-Eddy Simulation (LES) model that runs on a Graphics Processing Unit (GPU), so that simulation data sets can be generated much faster.

To back up, let’s define these terms.

Graphics Processing Unit (GPU). A GPU is the processor on a computer’s graphics card, designed to render images, animations, and visualizations very quickly. Many scientific codes, not just those used in meteorology, use GPUs, and for good reason: they hold great promise for speeding up calculations. GPU acceleration has become popular in high-performance computing because supercomputers running large simulations consume a great deal of power, and GPUs have shown that they can get much more computing done with much less power, a real savings for institutions watching their bottom line.

Large-Eddy Simulation (LES). LES is a type of atmospheric modeling run at fine (microscale) spatial resolution, so that most of the important, energetic turbulent motions of the atmosphere (the eddies) are explicitly resolved.

Large-Eddy Simulation has primarily been a research tool for understanding the fundamentals of turbulence in the atmospheric boundary layer, the lowest layer of Earth’s atmosphere, in direct contact with the surface. The problem is that LES needs many more grid points to represent a given area, which makes it extremely computationally demanding. That is why it has been largely restricted to the research realm.
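A rough way to see why finer resolution is so expensive is a simple scaling estimate. It assumes, for illustration only (these are not stated details of FastEddy or any particular model), a uniform grid over a domain of size L_x × L_y × L_z refined by the same factor r in every direction, and an explicit time step Δt limited by the Courant–Friedrichs–Lewy (CFL) condition, so Δt shrinks in proportion to the grid spacing Δ. Here T is the simulated period.

```latex
N = \frac{L_x}{\Delta x}\,\frac{L_y}{\Delta y}\,\frac{L_z}{\Delta z},
\qquad \Delta \to \frac{\Delta}{r} \;\Rightarrow\; N \to r^{3} N,
\qquad \Delta t \to \frac{\Delta t}{r}

\text{cost} \propto N \cdot \frac{T}{\Delta t}
\;\Rightarrow\; \text{cost} \to r^{3} \cdot r \cdot \text{cost} = r^{4}\,\text{cost}
```

Under these assumptions, making the grid 10 times finer (r = 10) means 10^3 = 1,000 times as many grid points and roughly 10^4 = 10,000 times as much computation for the same domain and simulated period.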

Until Now

FastEddy® is the new, aptly named LES model being developed at NCAR. The name says it all: a GPU-accelerated (Fast) Large-Eddy Simulation (Eddy) model.

Sponsored by the Department of Defense’s Defense Threat Reduction Agency (DTRA), the project’s original purpose was to bring accelerated fine-scale modeling into first-responder training curricula on the atmospheric transport and dispersion (T&D) of plumes. NCAR has had a long and productive relationship with DTRA in T&D modeling, so the partnership was natural. The developers’ goal was to meet the performance requirements without relying on a supercomputer.

When this project started in 2017, the developers decided to build FastEddy from scratch. They could have adapted existing code from various models, but the specter of problems in porting that code to a GPU architecture led them to make a clean start. This took more time, but the meticulous approach avoided problems that could have prevented the model from fully exploiting GPU acceleration. Eighteen months later, the developers had a new, clean product. While it’s still early, and vetting and validation come next, the developers are enthusiastic. Said scientist and FastEddy developer Jeremy Sauer, “We’re at a point where we can say, ‘Here's a tool that will help change the game for the end user down the road.’ We can say that with some confidence, and we really believe that we’re giving our current sponsor the best solution [to their particular challenge] possible.”

FastEddy: Saving Money, Power and Time

In preliminary simulations of idealized atmospheric boundary layer scenarios, single-GPU FastEddy delivered results and performance roughly equivalent to identically configured Weather Research and Forecasting (WRF) model simulations running on 720 CPU cores. A procurement cost of $4,000–$6,000 for one GPU, compared with $80,000–$100,000 for 720 CPU cores, is already a big win. On top of that, the GPU consumes at least a factor of 10 less power than all those CPUs, making GPU-accelerated modeling even more cost-effective.
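As a quick check on the procurement figures, dividing the CPU-core cost range by the GPU cost range gives the spread of the hardware-cost ratio. This uses only the ranges quoted in the paragraph and ignores power, cooling, and other operating costs.

```latex
\frac{\$80{,}000}{\$6{,}000} \approx 13.3
\qquad\text{to}\qquad
\frac{\$100{,}000}{\$4{,}000} = 25
```

On purchase price alone, then, the 720-core configuration costs roughly 13 to 25 times as much as a single GPU, before the power savings are counted.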

FastEddy: Research, Educational and Operational Potential

FastEddy has shown how effectively this approach captures the influence of turbulence across a wide range of potential applications. According to Sauer, academia would be an ideal prototyping environment in which to learn and test FastEddy, benefiting both trainers and trainees. Working with such a fully dynamic, evolving tool, they can discover how best to use it for their own purposes.

This LES approach will also be a viable tool for microscale operational, educational, and broader research applications. In fact, NCAR’s Research Applications Laboratory is poised to apply fine-scale models to needs such as:

- Optimally siting wind turbines, forecasting wind farm power production, and assessing wind resources in complex terrain;

- Calculating the building-aware transport and dispersion (T&D) of chemical, biological, or radiological material in the atmosphere, in response to an accidental or intentional release;

- Assessing the risk to populations from potential leaks of toxic material from industrial or transportation facilities;

- Calculating the transport of air (infiltration and exfiltration) between indoor airspaces and the atmosphere outdoors; and

- Providing characterizations of boundary-layer turbulence to operational systems for unmanned aerial systems.

FastEddy is meant to complement and be combined with mesoscale NWP models. Such a meso-microscale marriage would open up many more research and application opportunities.

“The time is ripe to take advantage of these accelerated computing platforms to start looking at a place where we couldn't look before because it was so computationally intensive,” Sauer concluded.